Introduction

In my previous post, I explained why good document organization matters for cognitive systems and outlined a few of the challenges of organizing scanned documents. In this post, I cover some potential solutions for organizing documents while you digitize them. Recall the core issue: unless you take explicit action at scanning time, scanned documents often lack useful metadata such as document type and date.

Solution 1: Manual filename labelling

A surefire but labor-intensive approach is to encode all of the relevant information into the filename as you scan each document. For example, scan a document to Acme_Receipt_20170112.pdf or Receipts/ACME/20170112.pdf. This solution is quite simple and solves for both document dates and types. Because it requires scanning one document at a time, it may only suffice for small document batches.

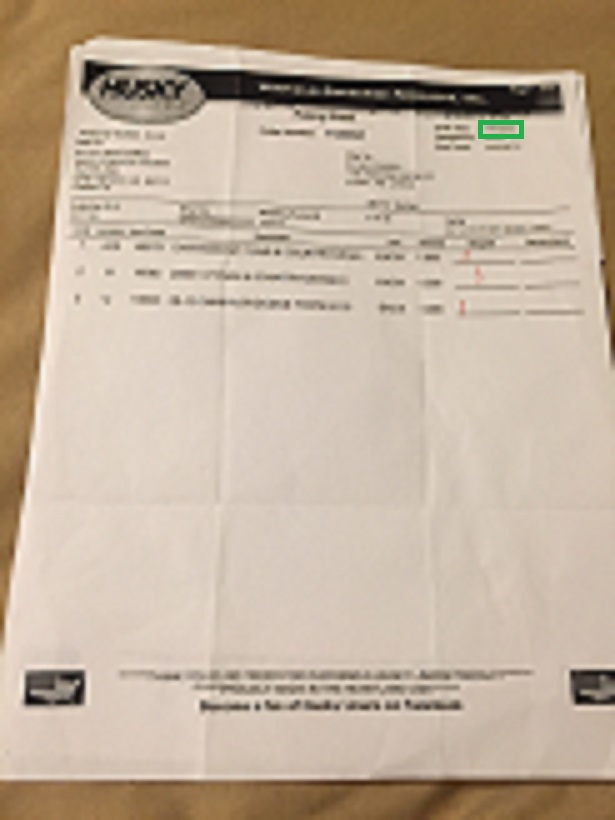
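
To keep hand-typed names consistent, it can help to generate them from the three pieces of information rather than typing them freehand. The following is a minimal sketch, assuming the two naming patterns shown above; the function names are my own for illustration and are not part of any scanning tool.

```python
import re
from datetime import date, datetime


def _clean(part: str) -> str:
    # Keep only letters and digits so "_" and "/" stay unambiguous separators.
    return re.sub(r"[^A-Za-z0-9]", "", part)


def scan_filename(vendor: str, doc_type: str, doc_date: date) -> str:
    """Build a flat name in the style of Acme_Receipt_20170112.pdf."""
    return f"{_clean(vendor)}_{_clean(doc_type)}_{doc_date:%Y%m%d}.pdf"


def scan_path(vendor: str, doc_type: str, doc_date: date) -> str:
    """Build a nested path in the style of Receipts/ACME/20170112.pdf."""
    # Assumption: the folder name is the type plus a naive plural "s".
    return f"{_clean(doc_type)}s/{_clean(vendor).upper()}/{doc_date:%Y%m%d}.pdf"


_FLAT_NAME = re.compile(r"([A-Za-z0-9]+)_([A-Za-z0-9]+)_(\d{8})\.pdf")


def parse_scan_filename(name: str):
    """Recover (vendor, type, date) from a flat name, or None if it does not fit."""
    match = _FLAT_NAME.fullmatch(name)
    if match is None:
        return None
    vendor, doc_type, stamp = match.groups()
    try:
        doc_date = datetime.strptime(stamp, "%Y%m%d").date()
    except ValueError:  # eight digits that are not a real calendar date
        return None
    return vendor, doc_type, doc_date


print(scan_filename("Acme", "Receipt", date(2017, 1, 12)))  # Acme_Receipt_20170112.pdf
print(scan_path("Acme", "Receipt", date(2017, 1, 12)))      # Receipts/ACME/20170112.pdf
```

Writing the date as YYYYMMDD has a side benefit: sorting the filenames alphabetically also sorts them chronologically.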

Solution 2: Zone-based OCR (aka template-based OCR)

Most commercial OCR engines allow you to train a simple scanning model. You tell the model to look at certain coordinates and extract the text found there into a structured data field. For instance, you can draw a rectangle with corners at (600, 100) and (650, 120) and extract the text inside that rectangle into a ‘Date’ field. This approach works well if you have one document type, or a very small number of them, with a completely consistent layout (for example, the date always occurs in the same spot). Since each document type requires its own model, you need to sort the documents before scanning, and you probably don’t want to spend time building too many scanning models. This approach only solves document dates; your sorting approach needs to solve document types.
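
The idea behind a zone can be sketched without any particular OCR product. The example below assumes the OCR engine has already returned each word with its pixel bounding box; the `Word` and `Zone` classes and the coordinates are hypothetical and only illustrate the principle, not any vendor's template format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Word:
    """One OCR'd word with its bounding box in page pixels (assumed input shape)."""
    text: str
    left: int
    top: int
    right: int
    bottom: int


@dataclass(frozen=True)
class Zone:
    """A named rectangle on the page that maps to one structured field."""
    field: str
    left: int
    top: int
    right: int
    bottom: int

    def contains(self, word: Word) -> bool:
        # A word belongs to the zone if its center point falls inside the rectangle.
        cx = (word.left + word.right) / 2
        cy = (word.top + word.bottom) / 2
        return self.left <= cx <= self.right and self.top <= cy <= self.bottom


def extract_fields(words, zones):
    """Return {field name: text found inside that field's zone}."""
    fields = {}
    for zone in zones:
        hits = [w for w in words if zone.contains(w)]
        # Rough reading order: top to bottom, then left to right.
        hits.sort(key=lambda w: (w.top, w.left))
        fields[zone.field] = " ".join(w.text for w in hits)
    return fields


# One template per document type: here a single illustrative 'Date' zone.
receipt_template = [Zone("Date", 600, 100, 650, 120)]
page_words = [
    Word("ACME", 50, 40, 120, 60),
    Word("2017-01-12", 602, 102, 648, 118),
    Word("Total", 50, 700, 100, 720),
]
print(extract_fields(page_words, receipt_template))  # {'Date': '2017-01-12'}
```

The sketch also shows why consistency matters: if a date is printed a few lines lower on one document, its center falls outside the rectangle and the field comes back empty.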

Solution 3: Document classification with machine learning

If you have a handful of document types and a large total number of documents, it makes sense to throw some machine learning at the problem. You can train a classification model to look at your document contents and sort them into the correct types. This approach requires you to label some documents by hand as “ground truth” (at least 10 per type, preferably 50 per type) to train the model. You can use a document text classifier on OCR’d document contents, or if your documents are a single page you can try an image classifier as well. This approach still requires some manual review, because your machine learning model will never be perfect, but it helps sort large numbers of documents quickly. This approach only solves document types; you still need to solve document dates.
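
To make the text-classifier option concrete, here is a toy sketch of a naive Bayes classifier over OCR'd text, written in plain Python. Naive Bayes is my choice for illustration only; any text classification service or library could fill the same role. The training texts are made up, and two examples per type is far below the 10 to 50 suggested above.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    # Lowercase alphabetic words only; digits and punctuation are ignored.
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesClassifier:
    """Multinomial naive Bayes with add-one smoothing."""

    def __init__(self):
        self.word_counts = defaultdict(Counter)  # label -> token counts
        self.doc_counts = Counter()              # label -> number of training docs
        self.vocab = set()

    def train(self, labeled_docs):
        for text, label in labeled_docs:
            tokens = tokenize(text)
            self.word_counts[label].update(tokens)
            self.doc_counts[label] += 1
            self.vocab.update(tokens)

    def scores(self, text):
        """Return {label: log-probability score}; higher means more likely."""
        tokens = [t for t in tokenize(text) if t in self.vocab]  # skip unseen words
        total_docs = sum(self.doc_counts.values())
        result = {}
        for label, n_docs in self.doc_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(n_docs / total_docs)  # prior for this type
            for token in tokens:
                score += math.log((counts[token] + 1) / denom)
            result[label] = score
        return result

    def predict(self, text):
        scores = self.scores(text)
        return max(scores, key=scores.get)


# Hypothetical hand-labeled "ground truth".
ground_truth = [
    ("Receipt total amount paid cash thank you for your purchase", "Receipt"),
    ("Receipt subtotal tax total paid by card", "Receipt"),
    ("Invoice amount due payment terms net 30 remit to", "Invoice"),
    ("Invoice number bill to due date balance due", "Invoice"),
]
model = NaiveBayesClassifier()
model.train(ground_truth)
print(model.predict("Balance due within 30 days, please remit payment"))  # Invoice
print(model.predict("Thank you for your purchase, paid cash"))            # Receipt
```

One way to organize the manual review is to compare the top two values returned by `scores` and route documents with a small gap to a person, though the right threshold depends on your documents.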

Solution 4: Heuristic type detection

If your document types are very distinct from one another, you can use simple heuristics on the document content to sort them. If I am sorting personal receipts, the presence of a key phrase is often enough to tell me the document type. For instance, any document containing the phrase “SomeCorp Inc” goes into the “SomeCorp Receipt” type. This approach only solves for document types.
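
A key-phrase rule like this takes only a few lines. The sketch below assumes the document text has already been OCR'd into a string; the second rule and the function name are hypothetical and only there to show how the list grows.

```python
# Ordered rules: the first phrase found in the text decides the type.
TYPE_RULES = [
    ("SomeCorp Inc", "SomeCorp Receipt"),
    ("Example Utilities", "Utility Bill"),  # hypothetical second rule
]


def detect_type(text, rules=TYPE_RULES, default=None):
    """Return the type of the first rule whose key phrase appears in the text."""
    # Collapse whitespace and ignore case, since OCR output often has odd
    # line breaks and inconsistent capitalization.
    normalized = " ".join(text.split()).casefold()
    for phrase, doc_type in rules:
        if phrase.casefold() in normalized:
            return doc_type
    return default


print(detect_type("SOMECORP   INC\nStore #12\nTotal 4.50"))  # SomeCorp Receipt
print(detect_type("Dear customer, thank you for writing."))  # None
```

Documents that match no rule come back as `None`, which gives you a natural pile for manual sorting or for one of the other approaches.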

Solution 5: Heuristic date detection

When you are armed with the document type, you often have a clue about how the date may be expressed. Potential rules include “receipt dates are always the first date seen in a document” or “the document date is the date that occurs most frequently in a document” (for example, a date printed in the footer of every page). You can also build rules using the context before and after a date, such as “take the document date from the ‘Effective Date:’ context; if not found, use the ‘Date:’ context; if not found, use the first date in the document”. This approach works best if the document type is known ahead of time so that it can be included in the heuristic. However, the document type is not strictly required, especially if your documents come from a common domain.
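
The three kinds of rules above can be sketched with regular expressions. This example makes two loud assumptions: dates appear either as YYYY-MM-DD or as MM/DD/YYYY (US month-first order), and the date follows its label directly. Real documents will need more formats, and all function names here are my own.

```python
import re
from collections import Counter
from datetime import date

# Assumption: only YYYY-MM-DD and MM/DD/YYYY (month first) are recognized.
DATE_RE = re.compile(r"\b(?:(\d{4})-(\d{2})-(\d{2})|(\d{1,2})/(\d{1,2})/(\d{4}))\b")


def _to_date(match):
    try:
        if match.group(1):  # YYYY-MM-DD branch
            return date(int(match.group(1)), int(match.group(2)), int(match.group(3)))
        return date(int(match.group(6)), int(match.group(4)), int(match.group(5)))
    except ValueError:  # looks like a date but is not one, e.g. 13/45/2017
        return None


def find_dates(text):
    """All valid dates in the text, in order of appearance."""
    found = (_to_date(m) for m in DATE_RE.finditer(text))
    return [d for d in found if d is not None]


def first_date(text):
    dates = find_dates(text)
    return dates[0] if dates else None


def most_frequent_date(text):
    dates = find_dates(text)
    return Counter(dates).most_common(1)[0][0] if dates else None


def date_after_label(text, label):
    """The first valid date that directly follows the label, e.g. 'Date: 2017-01-12'."""
    for m in re.finditer(re.escape(label) + r"\s*", text):
        date_match = DATE_RE.match(text, m.end())
        if date_match:
            found = _to_date(date_match)
            if found:
                return found
    return None


def document_date(text):
    """Fallback chain: 'Effective Date:', then 'Date:', then the first date."""
    return (date_after_label(text, "Effective Date:")
            or date_after_label(text, "Date:")
            or first_date(text))


sample = "Printed 01/05/2017\nEffective Date: 2017-01-12\nPage 1 of 2, 01/05/2017"
print(document_date(sample))       # 2017-01-12
print(first_date(sample))          # 2017-01-05
print(most_frequent_date(sample))  # 2017-01-05
```

Note that a plain “Date:” label also matches the tail of longer labels such as “Due Date:”, which is one reason knowing the document type helps: you can choose the labels and the order of the fallback chain per type.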

Conclusion

When scanning a large number of documents, it is important to have a good scheme for organizing the documents by type and date so that you can mine them for data and insight later. There are multiple approaches you can use to extract document type and date, each with pros and cons depending on the number and variety of documents and types, and you can mix and match these approaches to build an optimal solution.
