Introduction

Chatbots are a powerful way to put a natural language interface in front of your applications and services, helping users easily and naturally get what they need. In this post I will walk through a sample Watson Conversation Service application and call out places where you will want to extend it in the future. This is intended as a “my first chatbot” primer that prepares you for even more exciting applications.

Setting up the example

Let us pretend we are building a chatbot for a small retail operation in your town. As chatbot novices, we want to support questions on two topics: the hours and locations of our stores. We have two stores (one on Elm and one on Maple), and when possible we want to provide store-specific responses. Thus our chatbot needs to understand two dimensions of a user question: 1) is it an “hours” or a “location” question, and 2) is the question about a specific store?

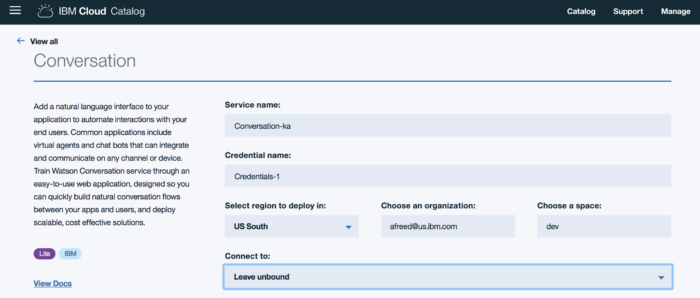

Step 0: Create an IBM Cloud account and Watson Conversation tile

Setting up an IBM Cloud account, adding Watson Conversation from the catalog, and creating the conversation workspace are well covered by other tutorials, so I will omit those steps from my example and instead link to a nice Watson Conversation tutorial.

Step 1: Build the intents

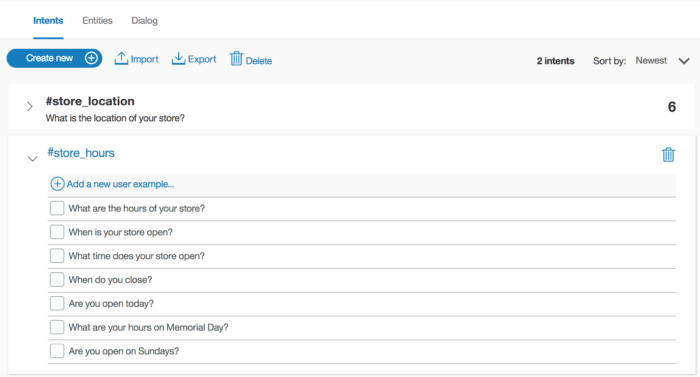

Whenever the user sends input to our bot (in the parlance, an “utterance”), we want to understand what the user means (the “intent”). I like this terminology: literally, what is the intent of our user? In order to understand user intents, we need to train the system with examples of each intent we plan to handle.

In the following diagram, you can see the two intents I created (#store_location and #store_hours) and the set of example questions for #store_hours. Note that the set is by no means exhaustive. This is a key feature of Watson technology: the Conversation service can understand questions similar to (but not exactly matching) these questions. Note also the variation in the questions, which cover a reasonable subset of the ways people ask about store hours.

Protip: Generally you should not invent sample questions. Ideally you should get examples from real users of your system (from logs, a mock interface, etc.). Your training data needs to be representative of the data the bot will receive at runtime.

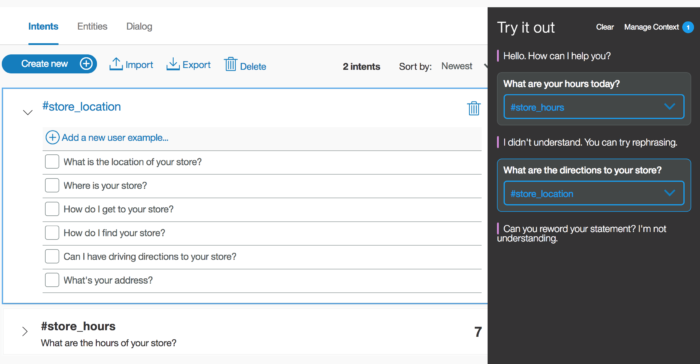

Watson Conversation service requires a minimum of five examples per intent. This is impressively low. Remember that machine learning usually requires a lot of data, yet five examples are enough to train a minimally testable model. (Ten examples per intent provide good results for your time investment; more examples are useful but provide diminishing returns.) After entering my training data I opened the testing interface, waited a few seconds for training to finish, and started testing my model.
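To make the five-example minimum concrete, here is a minimal sketch that checks a set of training data before you enter it. The dictionary layout, the function name `intents_below_minimum`, and the example questions are all my own assumptions for illustration (the questions are invented, which the protip above advises against for real training data); this is not the service's own format or API.

```python
MIN_EXAMPLES_PER_INTENT = 5  # the minimum stated in the text above

# Hypothetical training data: intent name -> example utterances.
# These questions are invented for illustration only.
training_data = {
    "store_hours": [
        "When do you open?",
        "What time do you close?",
        "Are you open on Sunday?",
        "What are your hours?",
        "How late are you open tonight?",
    ],
    "store_location": [
        "Where are you located?",
        "Where is your store?",
        "What is your address?",
    ],
}


def intents_below_minimum(data, minimum=MIN_EXAMPLES_PER_INTENT):
    """Return {intent: distinct example count} for intents under the minimum."""
    short = {}
    for intent, examples in data.items():
        # Count distinct, non-empty examples, ignoring case and outer whitespace.
        distinct = {e.strip().lower() for e in examples if e.strip()}
        if len(distinct) < minimum:
            short[intent] = len(distinct)
    return short


print(intents_below_minimum(training_data))
```

Here only the hypothetical `store_location` intent is reported, because it has three examples instead of five.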

Note that the Conversation service correctly detects the intent of “What are your hours today?” as a “#store_hours” intent, even though it is not a direct match for any of the training examples.

Additionally, the service detects “What are the directions to your store?” as a “#store_location” intent. Again, this question does not directly match any training data; Watson Conversation decided which intent the question was closest to based on its training.
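The idea of picking the “closest” intent can be illustrated with a deliberately simple stand-in. The sketch below is not how Watson Conversation works internally (the service uses a trained model); it is a toy nearest-example classifier based on word overlap, with invented training examples and hypothetical function names, meant only to show what matching without an exact match looks like.

```python
import re

# Hypothetical training examples, invented for illustration only.
TRAINING = {
    "store_hours": [
        "When do you open?",
        "What time do you close?",
        "Are you open on Sunday?",
        "What are your hours?",
        "How late are you open tonight?",
    ],
    "store_location": [
        "Where are you located?",
        "Where is your store?",
        "How do I get to your store?",
        "What is your address?",
        "I need directions to your store",
    ],
}


def tokens(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def similarity(a, b):
    """Jaccard similarity: shared words divided by all distinct words."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def closest_intent(utterance):
    """Return (intent, score) of the most similar training example."""
    best_intent, best_score = None, 0.0
    for intent, examples in TRAINING.items():
        for example in examples:
            score = similarity(utterance, example)
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent, best_score


for question in ("What are your hours today?",
                 "What are the directions to your store?"):
    print(question, "->", closest_intent(question))
```

Neither test question appears verbatim in the toy training set, yet each lands on the expected intent. A word-overlap measure is brittle compared with a trained model, which is part of why a managed service is attractive.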

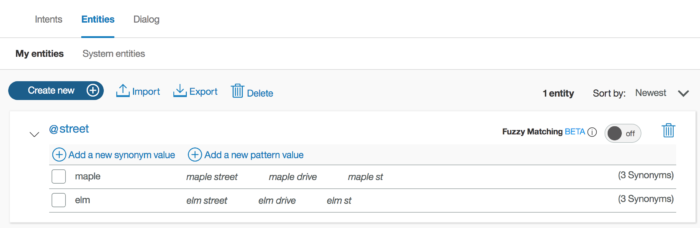

Step 2: Detect entities

In the setup we noted two retail store locations, one on Elm and one on Maple. We’d like to be able to detect whether users are referring to a specific store in their questions, so that we can give a specific response. We refer to these specifics as entities. Note that entities are orthogonal to intents: after all, you could ask about hours or locations for Elm or Maple. When we detect an entity, we know we have a specific question; without one, we assume a general question.

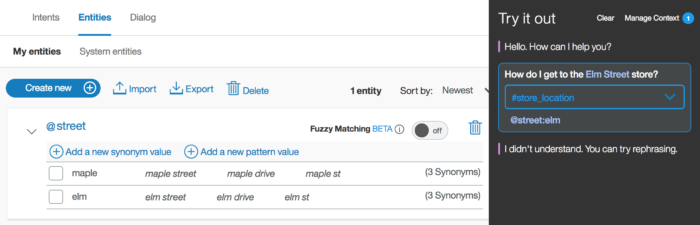

In the next diagram, you see the defined entities for our example. We declare one type of entity (@street) with two possible variations: elm and maple. We also note a few equivalence patterns – the phrases “elm street”, “elm drive”, and “elm st” are all “informationally equivalent” to “elm”.
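As a rough picture of what an entity with equivalence patterns does, here is a minimal sketch of synonym-based detection. The `STREET_ENTITY` dictionary and `detect_street` function are hypothetical names of my own, and the Maple synonyms are assumed by analogy with the Elm ones listed above; the real service handles this for you.

```python
import re

# Hypothetical stand-in for the @street entity: canonical value -> synonyms.
STREET_ENTITY = {
    "elm": ["elm street", "elm drive", "elm st"],
    # Assumed by analogy with "elm"; not listed in the text above.
    "maple": ["maple street", "maple drive", "maple st"],
}


def detect_street(utterance):
    """Return the canonical @street values mentioned in the utterance."""
    text = utterance.lower()
    found = []
    for value, synonyms in STREET_ENTITY.items():
        phrases = [value] + synonyms
        # Whole-word matching, so "elmwood" does not count as "elm".
        if any(re.search(r"\b" + re.escape(p) + r"\b", text) for p in phrases):
            found.append(value)
    return found


print(detect_street("How do I get to the Elm Street store?"))
print(detect_street("What are your hours?"))
```

The first call reports the `elm` value and the second reports nothing, which corresponds to the specific versus general question distinction described earlier.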

We can go directly to the testing interface, because adding entities does not require lengthy retraining. As you can see in the diagram below, the system detects “How do I get to the Elm Street store” as both the “#store_location” intent and the “@street:elm” entity.

Our training data never included samples with Elm Street, but our system was able to understand both dimensions of the user utterance.

Step 3: Build dialog flow

The last step in our example is to provide appropriate responses to user utterances. In the previous steps we only verified intent and entity detection. Let’s write up some more useful responses.

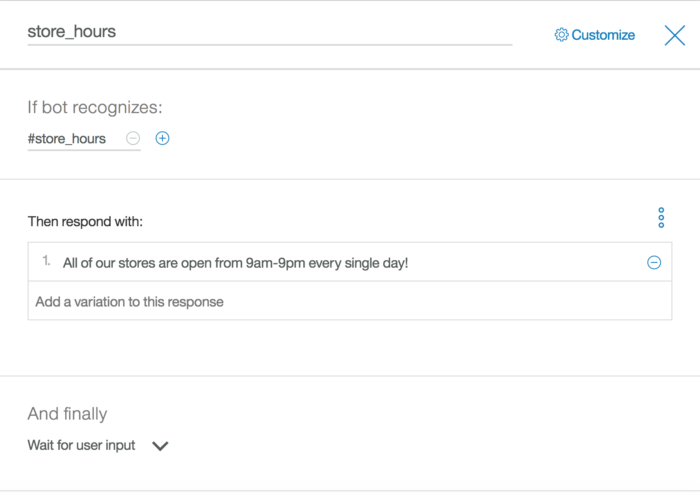

On the “Dialog” tab we create a new response node below the default “Welcome” node. The diagram below shows our store_hours node. In this node we define three things: 1) When does this node fire (on the “#store_hours” intent), 2) What is the response (or set of responses), and 3) What should the bot do next. For simplicity, we ignore any entities on hours questions.
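The three parts of a dialog node can be pictured as a small record. The sketch below is only a mental model: the `DialogNode` class, its field names, the `next_step` value, and the placeholder response text are my own assumptions, not the service's actual node format.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DialogNode:
    name: str
    condition: str        # 1) when the node fires, e.g. "#store_hours"
    responses: List[str]  # 2) the response, or set of responses
    next_step: str        # 3) what the bot should do next


# Hypothetical node; the response text is a placeholder, not real store hours.
store_hours_node = DialogNode(
    name="store_hours",
    condition="#store_hours",
    responses=["Placeholder: our store hours go here."],
    next_step="wait_for_user_input",
)


def run_node(node: DialogNode, detected_intent: str) -> Optional[str]:
    """Return the node's first response if its condition matches, else None."""
    if node.condition == "#" + detected_intent:
        return node.responses[0]
    return None


print(run_node(store_hours_node, "store_hours"))
print(run_node(store_hours_node, "store_location"))
```

Entities are ignored here on purpose, mirroring the simplification made for hours questions.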

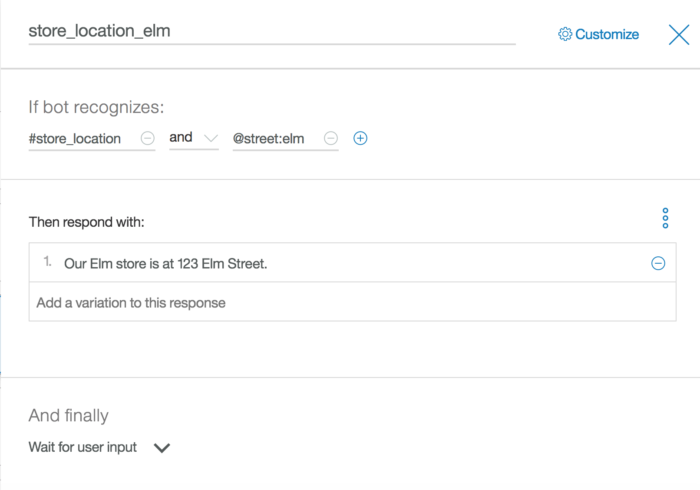

We can get more clever with our responses. For location questions, I defined three dialog nodes: one for Elm location questions, one for Maple location questions, and one for all remaining location questions. Below is the definition of the store_location_elm node. The remaining dialog nodes look similar to the other diagrams.
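One way to think about the three location nodes is as an ordered list in which the specific nodes come before the general one. The sketch below assumes nodes are checked top to bottom and the first match wins; that ordering rule, the `select_node` function, and the node names other than store_location_elm are my assumptions for illustration.

```python
from typing import Optional

# (node name, required intent, required street); None means "any street or none".
# Only "store_location_elm" is named in the text; the other names are hypothetical.
LOCATION_NODES = [
    ("store_location_elm", "store_location", "elm"),
    ("store_location_maple", "store_location", "maple"),
    ("store_location_general", "store_location", None),
]


def select_node(intent: str, street: Optional[str]) -> Optional[str]:
    """Return the name of the first node whose conditions match, else None."""
    for name, node_intent, node_street in LOCATION_NODES:
        if intent != node_intent:
            continue
        if node_street is None or node_street == street:
            return name
    return None


print(select_node("store_location", "elm"))
print(select_node("store_location", None))
```

Placing the general node last matters in this sketch: if it came first, it would match every location question and the store-specific nodes would never be reached.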

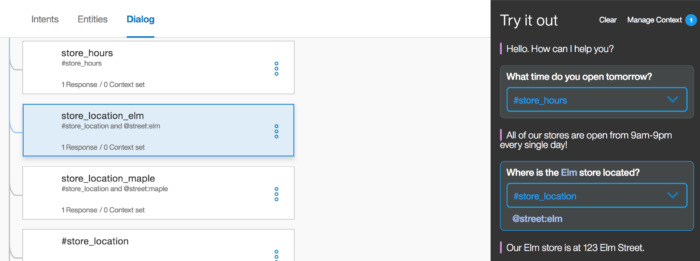

Now, the testing interface ties everything together. When it processes a user utterance, it displays which intent, entity, and dialog node fired.

Note that in this response the system even highlights the dialog node that was selected, further helping you understand how the system is working.

What should we do next?

I kept this example very small intentionally. This is a great way to learn new technology, but it leaves you short of a full-featured application. Here are some things I might consider doing next:

- Add new training data (what if your user question is just “store hours?”) matching real user input.

- Add new intents to the bot, covering more user utterances.

- Ask clarifying questions (“Which store are you asking about?”) when entities are not detected.

- Integrate with Watson Discovery. Conversation is good at handling common (“short tail”) user intents, while Discovery is good at the rarer (“long tail”) ones. (You can’t write specific dialog for every possible user intent!)

- Integrate with backend or third-party systems (show the store locations on a map?)
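To give a feel for the clarifying-question idea in the list above, here is a minimal sketch of a two-turn exchange that remembers a pending intent until the user names a store. The `HoursDialog` class, its method, and the reply strings are all hypothetical; in practice this state would live in the service's own dialog and context handling.

```python
from typing import Optional


class HoursDialog:
    """Toy dialog that asks which store when no @street entity is detected."""

    def __init__(self) -> None:
        self.pending_intent: Optional[str] = None

    def reply(self, intent: Optional[str], street: Optional[str]) -> str:
        # A bare store name after a clarifying question resumes the earlier intent.
        if intent is None and self.pending_intent and street:
            intent, self.pending_intent = self.pending_intent, None
        if intent == "store_hours":
            if street is None:
                self.pending_intent = "store_hours"
                return "Which store are you asking about?"
            return f"Here are the hours for our {street.title()} store."
        return "Sorry, I did not understand that."


dialog = HoursDialog()
print(dialog.reply("store_hours", None))  # user: "store hours?"
print(dialog.reply(None, "maple"))        # user: "Maple"
```

The first turn triggers the clarifying question, and the second turn answers for the Maple store even though the user never repeated the word “hours”.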

Hopefully, this short example triggers your creativity on where to go next! Here are some additional examples of applications using Watson Conversation Service.

- An integration with Facebook Messenger: https://developer.ibm.com/recipes/tutorials/how-to-build-an-enhanced-chatbot-with-watson-conversation/

- An integration with NodeRed: https://developer.ibm.com/recipes/tutorials/how-to-create-a-watson-chatbot-on-nodered/

- Using Watson Conversation in your Android mobile app: https://developer.ibm.com/recipes/tutorials/making-an-android-mobile-app-that-uses-the-ibm-watson-conversation-service-as-a-chatbot/

- Integrating multiple services: Watson Conversation, NLU, and weather data: https://developer.ibm.com/dwblog/2017/chatbot-watson-conversation-natural-language-understanding-nlu/
